The Growing Trend of AI: Sitting Down with Patricia Grabarek

June 26, 2019 in Diversity & Inclusion

By Joseph Sahili

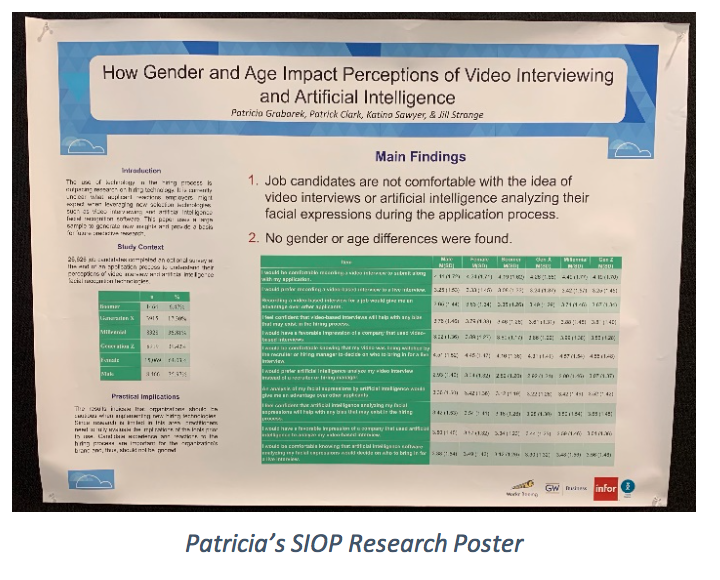

This week we have a special treat for you all: FMP interviewed the brilliant Patricia Grabarek on how the growing use of Artificial Intelligence (AI) based selection tools impacts Diversity and Inclusion (D&I) outcomes! Patricia is a former FMPer who presented research at this year’s SIOP conference, held right here in Washington, D.C. She presented on behalf of Infor, where, as a Senior Behavioral Scientist, she supports clients in implementing and making effective use of predictive analytics tools for human capital management. With an extensive background across all phases of the talent selection process, Patricia is about to drop some knowledge for anyone interested in this growing practice! Check out some of the highlights from our interview, where we ask how these tools will ultimately affect the D&I efforts that are growing in today’s workforce.

You recently presented research at SIOP on behalf of your employer, Infor, and Workr Beeing, a wonderfully insightful blog that you manage. The research involved AI talent assessment tools that can read the facial expressions of prospective candidates. The focus of the study was to identify satisfaction levels across a variety of demographic factors (e.g., gender, age). What were some of the major findings from this research?

“Interestingly, we found that regardless of age or gender, everyone felt wary of video and AI facial recognition technology used in the hiring process. In other work, we found that this also held true for race. The results indicate that people do not feel comfortable with new technologies. Surprisingly, they did not feel that AI would help reduce bias compared to recruiters or hiring managers reviewing videos.”

“While we did not delve into the reasons why in this study, we do have some theories. First, people are generally hesitant around new technologies, especially if they do not understand how they work. When it comes to hiring, people tend to be even more cautious since finding a job is of critical importance to many. Initially, we believed that individuals might feel that AI could be less biased than actual people, but recent press on these types of tools may have scared our participants away from this technology. With reports of AI being built with our subconscious biases, people are cautious about the technology.”

“We believe our study points to individual differences in technology adoption that are often underestimated when considering implementation. We like to group people by age, gender, etc. when trying to understand perceptions and behavior, but sometimes that does not matter.”

Do you believe that using AI-built talent assessment and selection tools can help mitigate the negative impact that unconscious biases can have in the hiring process?

“Not necessarily. It completely depends on how the tool is built. AI is created by humans with biases. We are teaching the system how to think. And we are learning now that removing bias is not simple. I believe if we are being very careful and cognizant of the potential for bias, we can help reduce it.”

“That being said, AI is a very broad term. If you are teaching a system to read faces, we’ve seen that we often use white faces as the standard, and it creates a lot of error in interpreting other faces. But AI is also used in algorithmic analyses. For example, at Infor, we are using AI to help us analyze large quantities of data. This is a completely different use of AI. If you are using AI to help you make smarter statistical decisions or to run millions of analyses in a quick and efficient way, bias does not come into play. If you are building predictive models, you can reduce bias as long as your data doesn’t have bias inherently in it. If you are using zip code to predict performance, clearly you will run into issues. If you use other data like behavioral preference, you can predict performance in a non-biased way. If you are teaching it to be a fantastic statistician using metrics that are not tied to race, gender, or age, it can be extremely helpful in building strong research-based selection tools. If you are teaching it to read faces, you face many more challenges in removing bias.”

What benefits and risks do you believe an organization could face by implementing an AI-based talent assessment or selection tool, as it relates to D&I best practices?

“Legally and ethically, we need to have selection systems that fairly select candidates. AI can help when building predictive models with good, thoughtful data (as described above). If you are using strong metrics to help predict who will be a good fit for a job and a good performer, AI can help increase diversity (we have a white paper at Infor on this; see the end of this post to check it out!). You are taking out the human element when reviewing resumes, etc. and starting with the prediction to know who to focus on.”

“However, as stated before, video interviews using AI for facial recognition can actually disadvantage minority groups that were not used in the initial AI training. Additionally, if you use poor data in predicting performance (the zip code thing is a real-life example, unfortunately), you will build bias into your prediction. Large tech companies have recently realized that taking things like college ranking into account can hurt diversity dramatically. Top colleges are often extremely expensive, and there are inherent societal issues that make it more challenging for certain groups to get to those schools than others. You can teach an AI system to screen for school rank, resulting in biased candidate rankings.”

There can always be room for improvement, and I wonder how effective the current tools are that exist in the market today. What challenges do you believe this technology still faces and must resolve in order to effectively adhere to D&I best practices?

“We still have so much to learn about AI. It’s buzzy and everyone wants in on it, but we know very little. Outside of data analysis and building predictive models, there hasn’t been enough research to really understand how effective AI can be and how it impacts diversity.”

“As with all new technology, the challenge is to have enough research support prior to implementation. Yet business doesn’t work that way. I would caution all organizations to take a step back before implementing a new tool and think through the possible consequences. Has the tool been vetted for adverse impact? What evidence is there that it is predictive of performance on the job? If the tool is brand new, there is likely little information on how it works. While I love new technology and the exciting things that we can do, I would never want to be a first client or first adopter for a new AI tool in selection.”
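For readers wondering what "vetted for adverse impact" can look like in practice, here is a minimal sketch of one common screening check, the four-fifths rule from the EEOC's Uniform Guidelines: a group whose selection rate falls below 80% of the highest group's rate is flagged for a closer look. This is an editorial illustration, not Patricia's or Infor's method. The function names, group labels, and applicant counts are all hypothetical, and a real adverse impact analysis would also weigh sample size and statistical significance.

```python
from typing import Dict, List, Tuple

# Maps a group label to (number selected, number of applicants).
Outcomes = Dict[str, Tuple[int, int]]


def selection_rates(outcomes: Outcomes) -> Dict[str, float]:
    """Selection rate per group, skipping groups with no applicants."""
    return {
        group: selected / applicants
        for group, (selected, applicants) in outcomes.items()
        if applicants > 0
    }


def impact_ratios(outcomes: Outcomes) -> Dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values(), default=0.0)
    if highest == 0.0:
        # Nobody was selected (or there is no data), so ratios are undefined.
        return {}
    return {group: rate / highest for group, rate in rates.items()}


def flag_groups(outcomes: Outcomes, threshold: float = 0.8) -> List[str]:
    """Groups whose impact ratio falls below the four-fifths threshold."""
    return [g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold]


# Hypothetical counts, made up purely for illustration.
example: Outcomes = {
    "group_a": (48, 80),  # 60% of applicants selected
    "group_b": (12, 30),  # 40% of applicants selected
}

if __name__ == "__main__":
    for group, ratio in impact_ratios(example).items():
        print(group, round(ratio, 2))
    print("Flagged:", flag_groups(example))
```

With these made-up numbers, group_b's ratio is about 0.67, which is under the 0.8 threshold, so it is flagged. A flag is a prompt to investigate the tool further, not proof of bias on its own.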

Your blog Workr Beeing consistently provides best practice ideas and research related to employee wellness. This is another growing topic within the world of Human Capital Management considering the bevy of positive research findings related to both the workforce and organizations that advocate for an enhanced employee experience. Do you believe that employee wellness overlaps in any way with D&I, and if so, how?

“YES! Inclusive work environments tend to breed healthy work environments. Research shows that being able to be authentic in the workplace is associated with reduced stress. People want to be authentic and feel better at work when they are. This includes all forms of diversity, from race, gender, and sexual orientation to even something as silly as their love for Star Wars. Being able to express yourself at work in a safe environment leads to happier and healthier employees.”

“Inclusive work environments are also psychologically safe. Psychological safety allows employees to be comfortable speaking up and not be punished for it. It allows for diversity of thought and experience to be expressed in a safe and meaningful way.”

“Inclusive work environments are also very respectful. Everyone benefits when they feel valued and respected at work. Going into a work environment where you don’t feel that respect breeds stress, disengagement, and low performance. Employees want to feel valued!”

Well, there you have it, folks! AI technology isn’t going anywhere anytime soon, but any organization that chooses to implement these kinds of tools would benefit greatly from doing its research first. When it comes to analyzing vast data sets and identifying predictive outcomes, playing to the strengths of this technology comes down to having bias-free information. What we must be wary of, then, is that when we teach a computer to interpret non-numerical data, we do so in a way that reflects our own cognitive processes, which have inherent biases. When this is done correctly, organizations can make effective decisions in identifying high performers within a vast and diverse talent pool. This in turn is just one aspect of building toward a D&I work culture in which employees feel valued and respected when acting authentically. I’d like to give a special shout-out to Patricia for making this blog a reality. Fight on, Trojans!

Sources for more information:

Infor white paper on how AI can help increase diversity – https://pages.infor.com/hfe-white-paper-talent-science-diversity-employee-assessment-selection.html?cid=NA-NA-HCA-US-HCM-1115-FY16-HCM-Assets-WWIW-38208

Workr Beeing blog homepage – https://workrbeeing.com/

Mercer’s report on D&I technologies – https://info.mercer.com/DandItech